AI-Enabled Prototyping

Overview

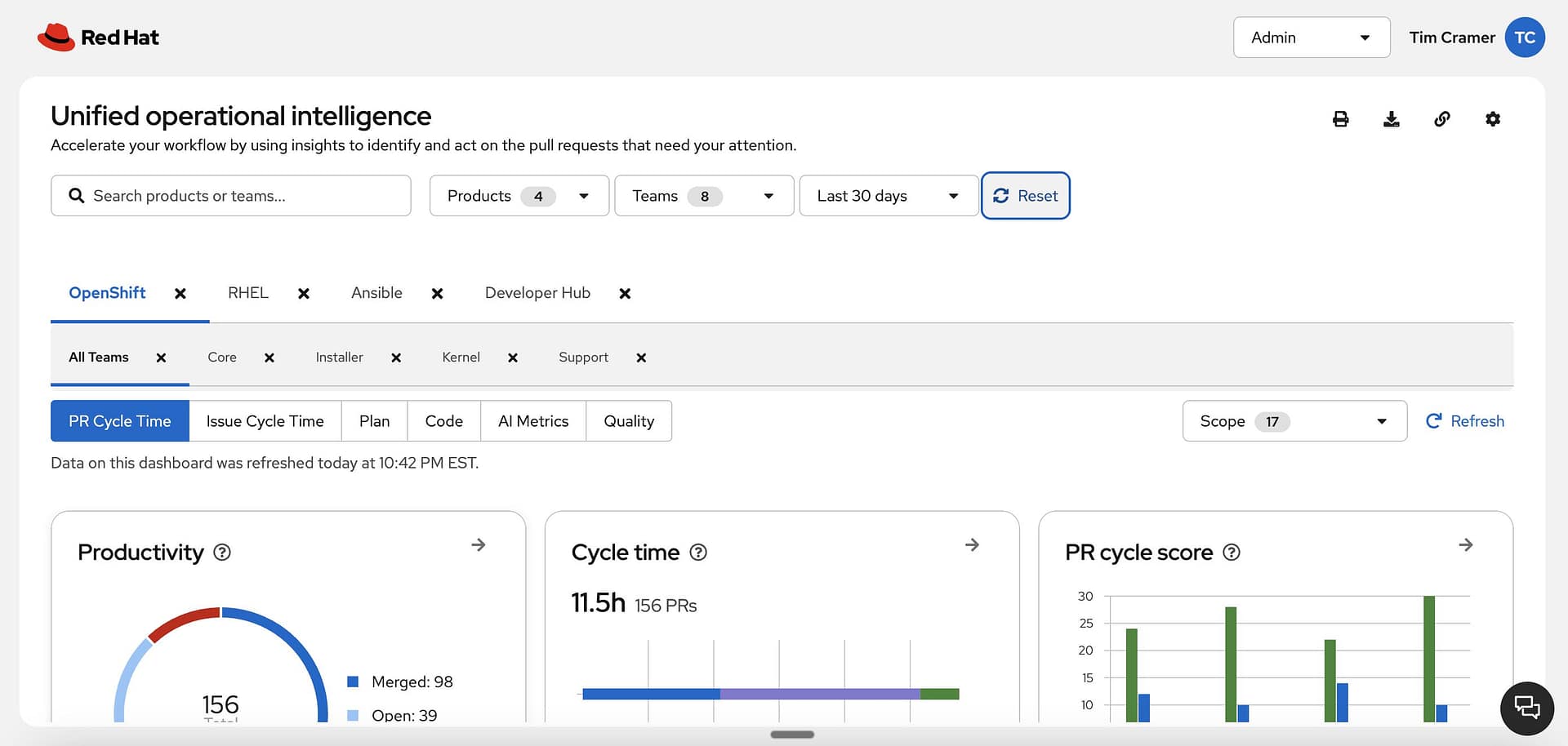

AI adoption was becoming a broader organizational priority, with growing pressure across teams to improve speed, quality, and operational decision-making. One important opportunity was failure analysis before code release: helping teams understand where risks were surfacing, how internal signals were performing, and what needed attention before issues reached production.

This work sat at the intersection of UX, engineering operations, and AI-enabled workflows. It was not simply about making information visible. It was about creating a clearer way for teams to interpret risk, prioritize action, and trust what the system was telling them.

Challenge

Failure-analysis signals already existed, but the experience around them was fragmented. Important context lived across disconnected tools, workflows, and systems, which made it hard for teams to answer basic questions quickly:

- What is going wrong?

- How serious is it?

- Where should we focus first?

- What requires follow-up before release?

- How much confidence should we place in the signal?

Because the experience was fragmented, teams had limited visibility, inconsistent trust in AI-supported recommendations, and no unified way to act efficiently. Leadership also lacked a clear view across teams and patterns.

The goal was to reduce customer support tickets by 20%, improve team efficiency by 30%, and create a scalable model that could support 100+ teams.

My role

My role was to create a unified operational intelligence experience, built as an AI-enabled prototype, that gives engineering teams and leadership clear visibility into failure analysis before code is pushed live. The opportunity was to turn fragmented internal signals into a trusted, actionable system that could improve decision-making, strengthen adoption of AI-supported workflows, and scale more efficient operations across the organization.

The real challenge was not just that information was scattered. It was that users could not easily move from signal to understanding to action. The design opportunity was to create a clear, intelligent, and testable experience that helped teams interpret risk faster, prioritize next steps, and build trust in AI-supported recommendations.

At the same time, the work exposed a broader shift in design practice. A traditional linear UX process, where research, wireframes, handoff, and validation happen one after another, was not enough for this kind of dynamic systems work. The pace and complexity required a more integrated model where design, prototyping, workflow logic, and validation could evolve together.

Strategy

I approached this challenge as a systems-thinking practice in which AI-enabled experiences, automation, and optimization work together to solve complex problems more intelligently. Rather than relying only on traditional linear workflows, I used more executable methods that allowed design, prototyping, logic, and validation to evolve together. This helped reduce friction, improve clarity, accelerate learning, and create experiences that were not only usable, but operationally effective and ready to scale.

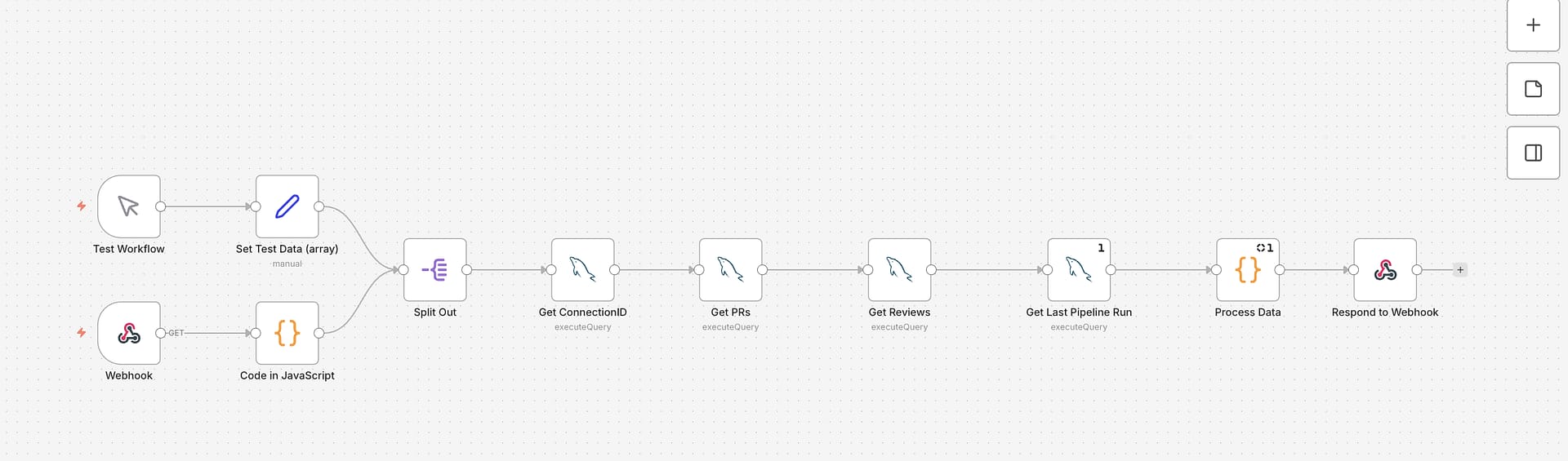

I used rapid prototyping to move quickly from concept to something tangible and testable. Rather than relying on static mockups, I connected the prototype to live workflow logic through MCP servers and an n8n agentic automation flow, which made it possible to test with more realistic data conditions and likely outcomes.
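As an illustration only, the kind of signal a prototype like this might receive from an automation flow can be sketched as below. The field names (`severity`, `confidence`, `blocks_release`) and the ranking rule are hypothetical; they are not taken from the actual n8n flow or MCP servers and simply mirror the questions listed under Challenge.

```python
from dataclasses import dataclass


# Hypothetical shape of one failure-analysis signal. Field names are
# assumptions for illustration, not the real workflow schema.
@dataclass
class FailureSignal:
    summary: str          # what is going wrong
    severity: int         # how serious it is, 1 (low) to 5 (critical)
    confidence: float     # how much to trust the signal, 0.0 to 1.0
    blocks_release: bool  # whether follow-up is required before release


def prioritize(signals):
    """Put release blockers first, then order by severity weighted by confidence."""
    return sorted(
        signals,
        key=lambda s: (s.blocks_release, s.severity * s.confidence),
        reverse=True,
    )


# Made-up sample data, used only to exercise the sketch.
sample = [
    FailureSignal("Flaky login test", 2, 0.9, False),
    FailureSignal("Payment API timeout", 5, 0.6, True),
    FailureSignal("Memory growth in worker", 4, 0.8, False),
]

for s in prioritize(sample):
    print(f"{s.summary}: severity={s.severity}, confidence={s.confidence:.0%}")
```

Showing confidence next to severity, rather than folding both into a single opaque score, is one possible way to keep a recommendation inspectable, which matters when the aim is to build trust in it.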

Rather than treating design as a sequence of static outputs, I worked in a more integrated loop: framing, shaping, prototyping, and validating in parallel. This allowed me to reduce ambiguity earlier and make the work more tangible for the team.